Building cost-aware cloud solutions is very different from the traditional IT approach. When developing applications for a traditional on-premises data center, we used to allocate servers and hardware for the maximum capacity the application would ever need. Even if that peak capacity was needed for only a few hours or days a year, we still provisioned for it, because allocating hardware in a traditional data center was a tedious and time-consuming job.

With the evolution of the cloud, allocating hardware is just a click away. Applying the traditional approach of allocating maximum capacity up front is therefore not recommended in the cloud, and it is rarely feasible from a cost perspective: you end up paying for idle, redundant resources most of the time, which makes the solution very expensive.

So there is a big difference between the traditional IT and cloud approaches to resource allocation. In traditional IT, you provision for the worst-case scenario. In the cloud, you provision only for the immediate requirement and plan to scale up on demand for the worst case.

Now the question arises: how do you plan resources and monitor cost for cloud-based applications? Consider the following points:

- Right Sizing – be Granular

- Make Consumption Cost one of your Architecture’s Quality Attributes

- Elasticity – Automate Scaling of Resources

- Tie Cost with Business Returns

- Continuous Monitoring

- Purchasing Options

- Architecture/Solution should be portable

Right Sizing – be Granular

While planning for capacity, be as granular as you can. Each cloud service comes in different sizes, costs and feature sets. Several small instances may meet your requirement better than one large instance, or vice versa. How do you decide? Only by understanding your requirements and calculating the expected load on the system.

Example

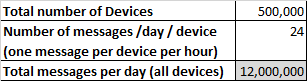

Suppose you are designing an IoT solution on the Azure platform and using Azure IoT Hub for message ingress. IoT Hub comes in different tiers (Free / S1 / S2 / S3), each with its own pricing and its own limit on the number of messages supported per day. So which one should you use, and what will it cost? To answer that, do a quick calculation of your message ingress load. Suppose you have half a million devices and each device sends one message per hour to the cloud. The total is then 500,000 × 24 = 12 million messages per day.

Now take this total and calculate how many units of S1, S2 or S3 would be required to support a load of 12M messages per day.

The calculation above shows that 2 units of S2 are enough for 12M messages per day, and are also the cheapest option. Each cloud service is unique, so identifying the right size for each service is the first step towards cost optimization.
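The same sizing exercise can be sketched in a few lines of Python. The per-unit daily quotas and monthly unit prices below are assumptions used only for illustration (they are not taken from this article's figure), so check the current Azure IoT Hub pricing page before relying on them.

```python
import math

# Assumed per-unit daily message quotas and illustrative monthly unit prices.
# Both are placeholders for this sketch, not authoritative Azure figures.
TIERS = {
    "S1": {"messages_per_day": 400_000, "price_per_unit": 25.0},
    "S2": {"messages_per_day": 6_000_000, "price_per_unit": 250.0},
    "S3": {"messages_per_day": 300_000_000, "price_per_unit": 2_500.0},
}


def daily_messages(devices: int, messages_per_device_per_hour: int) -> int:
    """Total messages sent to the cloud per day by the whole fleet."""
    return devices * messages_per_device_per_hour * 24


def size_tiers(load_per_day: int) -> dict:
    """Return the units needed and the monthly cost for each tier."""
    result = {}
    for name, tier in TIERS.items():
        units = math.ceil(load_per_day / tier["messages_per_day"])
        result[name] = {
            "units": units,
            "monthly_cost": units * tier["price_per_unit"],
        }
    return result


load = daily_messages(devices=500_000, messages_per_device_per_hour=1)
plan = size_tiers(load)
cheapest = min(plan, key=lambda name: plan[name]["monthly_cost"])

for name, row in plan.items():
    print(name, row["units"], row["monthly_cost"])
print("cheapest:", cheapest)
```

Under these assumed quotas, two S2 units cover exactly 12M messages per day, so any growth in the device fleet would require re-running the calculation.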

Make Consumption Cost one of your Architecture’s Quality Attributes

While architecting a solution, we consider the usual quality attributes, such as availability, scalability, security, performance and usability. These apply equally to solutions designed for the cloud and for traditional data centers. For the cloud, however, one more attribute plays a bigger role: cost of consumption. My suggestion is to treat “cost of consumption” as one of the architecture quality attributes and design the solution accordingly.

There may be scenarios where you prefer to give cost priority over another attribute, such as performance. One example I personally came across: we were using the Azure Data Lake Analytics service for data analysis. Azure Data Lake Analytics is an on-demand analytics job service, where you pay only for the job’s running time and nothing else. In the first design, we gave priority to performance and created a couple of jobs running in parallel, which read the same data source but performed different analytics. We achieved high performance, but the cost was high. We then changed the approach and merged these jobs into one. The merged job ran slightly slower, but saved almost 75% of the cost.

Elasticity – Automate Scaling of Resources

The cloud gives you the flexibility to allocate resources on demand whenever you need them, and to release them when you no longer do. As discussed earlier, allocate only the resources you need right now, but plan automation to scale up when demand increases. Almost all cloud providers offer this elasticity.

There are different ways an application can be scaled:

- Horizontal Scaling: adding or removing instances of a resource, also called scale-out or scale-in. This type of scaling usually does not require interrupting the service or application. Example: increasing or decreasing the number of instances of an Azure AppService – https://docs.microsoft.com/en-us/azure/monitoring-and-diagnostics/insights-how-to-scale?toc=%2fazure%2fapp-service-web%2ftoc.json#scaling-based-on-a-pre-set-metric

- Vertical Scaling: changing the capacity of the resource itself, also called scale-up or scale-down. This usually results in an interruption to the service or application. Example: scaling up the service tier of an Azure AppService – https://docs.microsoft.com/en-us/azure/app-service/web-sites-scale#scale-up-your-pricing-tier
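As a rough illustration of horizontal scaling, the sketch below shows a simple target-tracking rule: pick the instance count that would bring average CPU close to a target, within a minimum and a maximum. The function name, the 60% target and the instance limits are hypothetical choices for this example, not any provider's autoscale API.

```python
import math


def desired_instances(
    current: int,
    avg_cpu_percent: float,
    target_cpu_percent: float = 60.0,  # hypothetical target utilization
    min_instances: int = 1,            # hypothetical lower bound
    max_instances: int = 10,           # hypothetical upper bound (cost cap)
) -> int:
    """Return the instance count that moves average CPU towards the target."""
    if current < 1:
        raise ValueError("current must be at least 1")
    # Scale the pool in proportion to how far utilization is from the target.
    wanted = math.ceil(current * avg_cpu_percent / target_cpu_percent)
    # Clamp to the configured bounds so scaling can never run away.
    return max(min_instances, min(max_instances, wanted))


print(desired_instances(current=2, avg_cpu_percent=90))  # scale out
print(desired_instances(current=4, avg_cpu_percent=15))  # scale in
print(desired_instances(current=8, avg_cpu_percent=95))  # capped at the maximum
```

The upper bound matters for cost as much as for stability: it caps what an unexpected load spike can add to the bill.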

Tie Cost with Business Returns

Depending on the nature of the application, its cost of consumption should be justified by the business need for it and the returns from it. For example, suppose you are hosting an eCommerce application and have configured auto-scaling for it. If user load increases and the application scales up, the cost of consumption will also increase. But if user load is increasing on an eCommerce application, then direct profit should increase too, and that should cover the increase in cost of consumption.

If the increase in cost of consumption is not proportional to the increase in business, it is time to revisit the architecture and review each component and its cost in detail, i.e. to go back to point #1: be granular.
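One way to make this check concrete is to track cost as a share of revenue from one period to the next. The sketch below is a minimal example; the `Period` type, the 10% tolerance and the sample figures are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Period:
    cloud_cost: float  # cost of consumption for the period
    revenue: float     # business return attributed to the application


def cost_share(period: Period) -> float:
    """Cloud cost as a fraction of revenue."""
    if period.revenue <= 0:
        raise ValueError("revenue must be positive")
    return period.cloud_cost / period.revenue


def needs_architecture_review(prev: Period, curr: Period,
                              tolerance: float = 0.10) -> bool:
    """True when cost grew noticeably faster than the business did.

    The tolerance (hypothetical 10%) is the relative increase in cost
    share that is accepted before a review is triggered.
    """
    return cost_share(curr) > cost_share(prev) * (1 + tolerance)


last_month = Period(cloud_cost=1_000, revenue=20_000)
proportional = Period(cloud_cost=1_500, revenue=30_000)
runaway = Period(cloud_cost=2_000, revenue=25_000)

print(needs_architecture_review(last_month, proportional))
print(needs_architecture_review(last_month, runaway))
```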

Continuous Monitoring

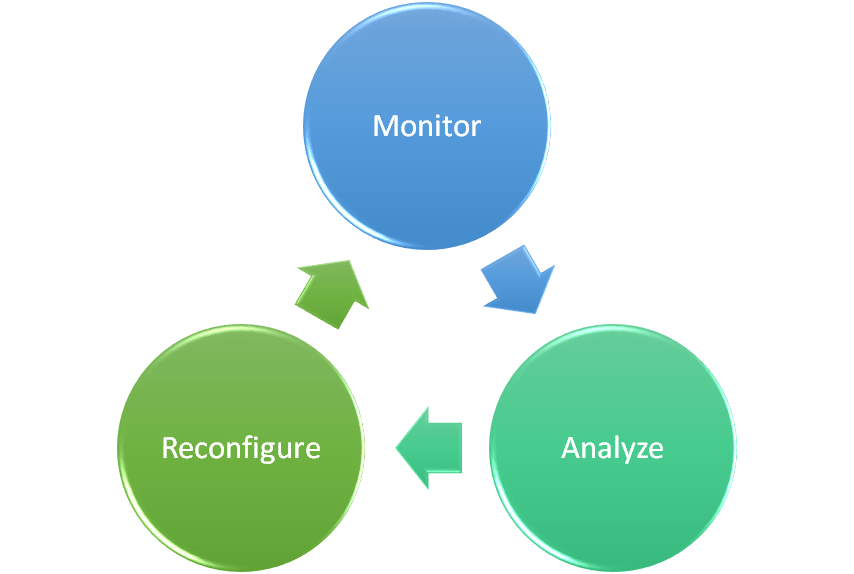

Unless you monitor, you will not know how much you are consuming, and you may get a big surprise at the end of the month. Monitoring does not mean monitoring only at the application level. It has to be granular, i.e. at the level of each resource consumed within the application. The best way is to tag each resource and monitor its usage daily. Based on the monitoring data, analyze consumption and identify optimization options. After identifying them, reconfigure your deployment and start monitoring again. So it is an iterative cycle: monitor, analyze, reconfigure, and monitor again.
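To show what resource-level monitoring by tag can look like, here is a minimal sketch that totals daily cost per tag value. The record layout, resource names and amounts are hypothetical; in practice the records would come from your provider's billing or usage export.

```python
from collections import defaultdict

# Hypothetical daily usage records, one per resource.
records = [
    {"date": "2024-01-01", "resource": "iot-hub", "tags": {"component": "ingest"}, "cost": 16.0},
    {"date": "2024-01-01", "resource": "queue", "tags": {"component": "ingest"}, "cost": 4.5},
    {"date": "2024-01-01", "resource": "analytics-job", "tags": {"component": "analytics"}, "cost": 8.25},
    {"date": "2024-01-01", "resource": "test-vm", "tags": {}, "cost": 2.0},
]


def cost_by_tag(usage_records: list, tag_key: str) -> dict:
    """Sum cost per value of the given tag; untagged resources are grouped."""
    totals = defaultdict(float)
    for record in usage_records:
        tag_value = record["tags"].get(tag_key, "untagged")
        totals[tag_value] += record["cost"]
    return dict(totals)


totals = cost_by_tag(records, "component")
# List the most expensive components first.
for component, cost in sorted(totals.items(), key=lambda item: -item[1]):
    print(component, cost)
```

Keeping an explicit "untagged" bucket makes it easy to spot resources that escaped the tagging policy.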

Purchasing Options

Cloud providers are getting innovative with pricing and offering attractive options, such as Monthly Commitment (for Azure) or Reserved Capacity (for AWS), alongside the usual pay-as-you-go model. But can you commit to capacity on the very first day? The answer is “No”. Start with the pay-as-you-go model, observe or benchmark your application’s consumption in production or production-like usage, and identify the minimum commitment you want to make. Once you can identify your application’s usage pattern, you can take advantage of the providers’ commitment offerings and save a significant amount.
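Once you have observed usage, choosing a commitment level can be framed as a small calculation. The sketch below uses a deliberately simplified model: committed units are paid for every hour at a discounted rate, and anything above the commitment is billed at the pay-as-you-go rate. The rates and the usage samples are hypothetical, and real commitment offerings have their own terms.

```python
def commitment_cost(usage: list, committed_units: int,
                    on_demand_rate: float, committed_rate: float) -> float:
    """Total cost over the observed hours for a given commitment level."""
    # Committed units are paid for every hour, whether used or not.
    committed = committed_units * committed_rate * len(usage)
    # Usage above the commitment is billed at the pay-as-you-go rate.
    overflow = sum(max(0, used - committed_units) for used in usage)
    return committed + overflow * on_demand_rate


def best_commitment(usage: list, on_demand_rate: float,
                    committed_rate: float) -> int:
    """Commitment level (in units) with the lowest total cost."""
    candidates = range(0, max(usage) + 1)
    return min(
        candidates,
        key=lambda units: commitment_cost(
            usage, units, on_demand_rate, committed_rate
        ),
    )


# Hypothetical observed usage: steady baseline with one short peak.
observed = [2, 2, 2, 2, 10, 2, 2, 2]
best = best_commitment(observed, on_demand_rate=1.0, committed_rate=0.5)

print("pay as you go only:", commitment_cost(observed, 0, 1.0, 0.5))
print("best commitment:", best, commitment_cost(observed, best, 1.0, 0.5))
print("commit to peak:", commitment_cost(observed, 10, 1.0, 0.5))
```

In this sample, committing to the steady baseline is cheaper than either pure pay-as-you-go or committing to the peak, which is why observing the usage pattern first matters.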

Architecture/Solution should be portable

The cloud is evolving, and new services are launched every day or every week. Gone are the days when you used to design a solution once and expect it to run uninterrupted for the next 10 years. It is not only new cloud services: even for existing services, new plans or SLAs may be offered. So your architecture needs to be agile and flexible enough to adapt to changes and take advantage of new services. For example, if your architecture follows microservices patterns, then you can leverage serverless cloud computing such as Azure Functions or AWS Lambda.

In short, cost optimization for a cloud-based solution starts in the architecture phase and continues throughout its life cycle in production. It is not a one-time activity but an ongoing one, to which everyone contributes: architect, designer, developer, tester and support engineer.
